The Trust Gap

Why Traditional Compliance Cannot Verify Modern Systems

There is a structural problem at the center of modern compliance practice, and most organizations manage it by not naming it directly.

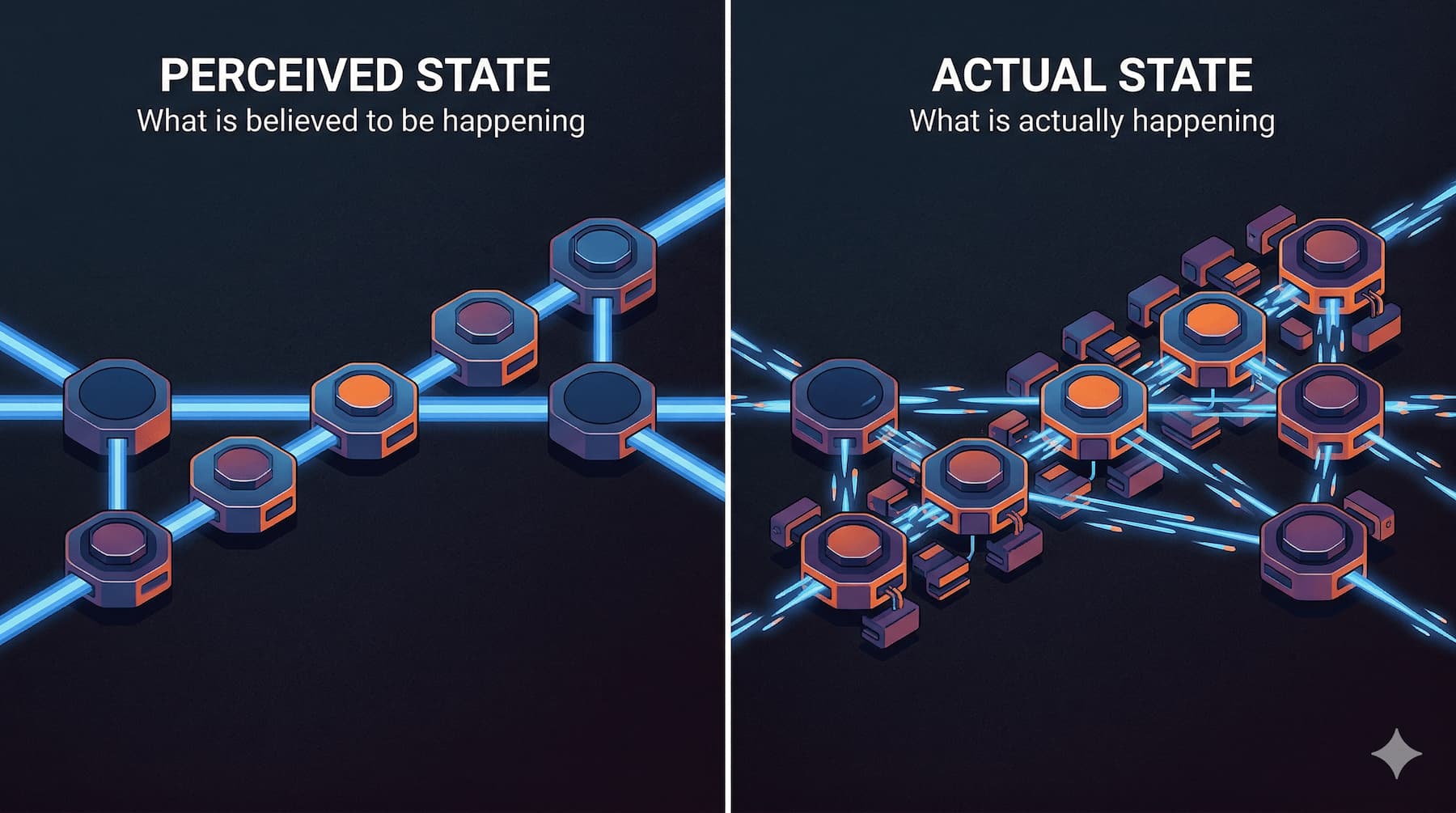

The problem is this: the systems that organizations operate have become faster, more distributed, and more architecturally complex than the compliance frameworks designed to verify them. The result is a widening gap between what systems actually do at runtime and what external parties can independently confirm about that behavior. When direct verification becomes difficult, stakeholders substitute institutional trust signals for continuous proof. Compliance persists, but its epistemic foundation weakens.

This chapter is diagnostic. It examines the structural conditions that produce this verification gap and explains why closing it requires more than refinements to existing audit practice. Understanding the gap is the necessary precondition for understanding why compliance must be reconceived as infrastructure.

How Audit-Based Compliance Evolved

Traditional compliance verification emerged from environments where systems were relatively stable, system state was durable, and the records required for verification were reconstructible after the fact.

In those environments, the fundamental logic of periodic auditing held. An auditor could examine documentation of intended controls, sample operational records that reflected actual system behavior, and form a reasonable judgment about whether the two aligned. The audit cycle introduced a temporal gap between system operation and external verification, but that gap was manageable because system configuration did not change dramatically between review intervals. A system audited in January closely resembled the system that had operated over the preceding twelve months.

This is not a criticism of the professionals who developed these frameworks or the organizations that adopted them. The frameworks were rational responses to the verification requirements of their era. The argument here is narrower: those requirements have changed, and the frameworks have not changed with them.

The Verification Limits of Documentation

Before examining what changed, it is worth distinguishing two categories of documentation that compliance practice often conflates.

The first is prospective documentation: policies, procedures, architecture descriptions, and control narratives. Prospective documentation describes how a system is intended to operate. It specifies the controls that should be in place, the procedures that should be followed, the architectural boundaries that should be maintained.

The second is retrospective artifacts: operational records such as logs, deployment histories, system state captures, and control outputs. Retrospective artifacts reflect what a system actually did. They are the evidence of runtime behavior.

In formal auditing practice, both categories constitute evidence, but they serve different functions. Prospective documentation establishes intent. Retrospective artifacts confirm execution. Verification depends on the latter, not the former. A policy document does not demonstrate that a control operated. A log entry does.

Traditional compliance models rely heavily on prospective documentation combined with sampled retrospective artifacts. The implicit assumption is that documentation of intended behavior, supported by selected operational records, is sufficient to support assurance conclusions about actual system behavior.

That assumption is most defensible when systems are stable and when the gap between documented intent and operational reality is narrow. It weakens when systems change faster than documentation can track them, and when the operational records required for verification are incomplete, fragmented, or no longer available at the time of review.

Velocity Mismatch

Modern deployment cycles operate on timescales that have no precedent in traditional compliance design.

A system subject to continuous deployment may receive dozens of configuration changes in a single day. Functional updates, dependency patches, infrastructure adjustments, and security remediations flow through automated pipelines on schedules measured in hours rather than months. The system that undergoes an annual compliance audit is not the same system that was operating twelve months earlier. It is not even the same system that was operating last week.

External compliance verification, by contrast, still occurs at discrete intervals. Annual audits, periodic certifications, and scheduled regulatory reviews examine a system at a moment in time and attempt to draw conclusions about an extended period of operation. The temporal gap between continuous system change and periodic external verification is not incidental to how modern compliance works. It is the defining structural feature of the current approach.

This creates a velocity mismatch that compounds over time. The longer the interval between verification events, the greater the divergence between the system that auditors examine and the system that operated during the period under review. Prospective documentation may reflect an earlier architectural state. Retrospective artifacts may be incomplete. The compliance record increasingly describes a version of the system that no longer exists.
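The scale of the mismatch can be made concrete with rough arithmetic. The sketch below is illustrative only: the rate of 24 changes per day is an assumed stand-in for "dozens", the review intervals are examples, and `changes_between_reviews` is a hypothetical helper rather than part of any framework.

```python
def changes_between_reviews(changes_per_day: float, review_interval_days: int) -> int:
    """Approximate number of changes that land between two external reviews."""
    return round(changes_per_day * review_interval_days)


# Assumed, illustrative figure: 24 changes per day stands in for "dozens".
CHANGES_PER_DAY = 24

for label, interval_days in [("annual", 365), ("quarterly", 90), ("monthly", 30)]:
    count = changes_between_reviews(CHANGES_PER_DAY, interval_days)
    print(f"{label:>9} review: ~{count} changes since the previous review")
```

Under these assumed figures, shortening the review interval reduces the number of intervening changes proportionally, but even a monthly review still faces several hundred of them.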

It is important to be precise about what this mismatch produces. It does not produce dishonesty. Organizations subject to continuous deployment are not misrepresenting their systems when they submit documentation that was accurate at the time it was written. The problem is structural, not ethical. The compliance record becomes stale not because anyone falsified it but because the system continued to change after the record was created.

System Complexity and the Fragmentation of State

Velocity mismatch is one axis of the verification problem. Architectural complexity is a second, compounding axis.

Modern infrastructure does not operate as a single system whose state can be examined at a single location. It operates as a collection of distributed services, each maintaining its own state, interacting through APIs and message queues, deployed and scaled independently according to runtime demand.

This architectural pattern introduces three specific verification challenges.

The first is fragmented system state. No single location in a distributed architecture reflects the complete operational state of the system. To reconstruct what a system did at a given moment requires assembling evidence from multiple services, each of which may record events at different granularities, in different formats, and with different retention policies. The evidence required for verification exists, if it exists at all, as a distributed artifact that must be assembled retrospectively.

The second challenge is ephemeral compute. Modern cloud infrastructure routinely creates and destroys compute resources on demand. A container that executed a transaction may no longer exist minutes later. The infrastructure component that enforced a control assertion during peak load may have been terminated as load decreased. Relevant system state is not merely distributed; it is actively transient. By the time external verification occurs, the components whose behavior is under review may have left no durable trace.

The third challenge is rapid configuration change through automated pipelines. Continuous delivery systems manage infrastructure configuration as code, applying changes through automated processes that operate faster than human review cycles. The configuration that was in place at the time of a compliance event may differ from the configuration visible at the time of the audit, not because anyone changed it in response to the audit but because the normal operation of the deployment pipeline continued to evolve the system in the interim.

These three characteristics interact. Fragmented state makes evidence assembly difficult. Ephemeral compute makes evidence preservation uncertain. Rapid configuration change makes the relationship between current documentation and historical system behavior increasingly tenuous. Together, they describe an environment where retrospective reconstruction of runtime behavior is structurally challenging even when organizations are operating in good faith and investing significant resources in compliance activity.
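A minimal sketch of how fragmentation and transience combine at review time follows. The service names, retention periods, and dates are hypothetical, chosen only for illustration; the model assumes that a source can supply evidence for an event only if its retention period is at least as long as the time elapsed between the event and the review.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class EvidenceSource:
    """A service that holds one fragment of the system's operational record."""
    name: str
    retention: timedelta  # how long this service keeps its records


def recoverable_sources(sources, event_time, review_time):
    """Return names of sources that still hold records from event_time at review_time."""
    age = review_time - event_time
    return [s.name for s in sources if s.retention >= age]


# Hypothetical services and retention periods, for illustration only.
sources = [
    EvidenceSource("api-gateway-logs", timedelta(days=30)),
    EvidenceSource("deploy-history", timedelta(days=365)),
    EvidenceSource("container-stdout", timedelta(hours=1)),  # ephemeral compute
]

event = datetime(2024, 1, 15, 12, 0)   # when the control operated
review = datetime(2024, 7, 15, 12, 0)  # when the auditor asks about it

available = recoverable_sources(sources, event, review)
missing = [s.name for s in sources if s.name not in available]
print("still recoverable:", available)
print("no longer recoverable:", missing)
```

In this example only the deployment history survives until the review, so the auditor can see what was deployed but not what the gateway or the container actually did.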

Trust Substitution

When direct verification becomes difficult at scale, a substitution occurs. Stakeholders shift from independently confirming system behavior to relying on proxy trust signals: certifications, third-party attestation artifacts, audit reports, procedural assurances, and organizational reputation.

This substitution is rational. No external stakeholder has the resources, access, or technical capacity to continuously verify the runtime behavior of every system they depend on. Trust signals serve as coordination mechanisms that allow complex ecosystems to function without requiring complete independent verification at every point.

Frameworks such as SOC 2 and ISO 27001 operate within this substitution logic. They are sampling-based verification mechanisms. They examine selected controls and selected artifacts over a defined period and produce conclusions about organizational compliance posture. These frameworks are valuable precisely because they provide structured, third-party assessment of at least some aspects of system behavior. They introduce accountability that would otherwise be absent.

But they provide partial assurance, not continuous verification of operational reality. A SOC 2 Type II report covers a defined period and examines a defined scope. It does not represent a continuous observation of system behavior. The controls that were in scope may differ from the controls that are operationally significant for a particular relying party. The period covered may not include the interval relevant to a specific compliance question.
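The limits of sampling can be illustrated with a simple probability calculation. The sketch below assumes simple random sampling without replacement, which actual audit engagements are not required to use, and the population, failure count, and sample size are invented for illustration. `miss_probability` is a hypothetical helper that returns the chance that a sample contains none of the control failures present in the population.

```python
from math import comb


def miss_probability(population: int, failures: int, sample_size: int) -> float:
    """Probability that a random sample (without replacement) contains no failures."""
    if not 0 <= failures <= population or not 0 <= sample_size <= population:
        raise ValueError("failures and sample_size must be between 0 and population")
    # Ways to draw the sample from non-failing items only, over all possible samples.
    return comb(population - failures, sample_size) / comb(population, sample_size)


# Assumed figures: 10,000 control executions, 20 of which failed, 25 sampled.
p = miss_probability(population=10_000, failures=20, sample_size=25)
print(f"chance the sample shows no failures: {p:.1%}")
```

With these assumed numbers, the sample is far more likely than not to show a clean result even though failures occurred during the period.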

The Trust Gap is the difference between the assurance that these sampling-based mechanisms provide and the continuous operational reality of systems that change faster than periodic verification can track. It is not a gap created by fraudulent actors or poorly designed frameworks. It is the structural consequence of applying verification mechanisms calibrated for stable, legible systems to infrastructure that is distributed, ephemeral, and continuously evolving.

What the Gap Produces

The consequences of the Trust Gap are operational, not merely theoretical.

When compliance artifacts describe system state accurately at the time they are produced but quickly diverge from operational reality, organizations carry a compliance posture that does not reflect their current risk profile. Regulators and partners relying on those artifacts make decisions based on an increasingly outdated picture of the systems they depend on or oversee.

When evidence required for verification is generated retrospectively from systems whose state is fragmentary or transient, the quality of that evidence degrades. Compliance teams spend significant resources assembling artifacts that may be incomplete, and auditors assess those artifacts knowing that completeness cannot be confirmed.

When trust signals substitute for direct verification, the substitution is often invisible. Neither the organization presenting the signals nor the stakeholders relying on them necessarily recognizes the extent to which assurance rests on institutional credibility rather than demonstrated operational behavior.

These conditions produce a compliance environment that is formally active but epistemically fragile. The artifacts exist. The certifications are current. The processes run on schedule. But the connection between those activities and the runtime behavior of the systems they are meant to address is weaker than it appears.

Distinguishing the Argument

Two clarifications are necessary before proceeding.

The first concerns intent. This chapter's argument is not that compliance practitioners, auditors, or regulated organizations are operating in bad faith. The Trust Gap is structural, not ethical. Organizations investing substantial resources in compliance programs under current frameworks are responding rationally to the requirements placed on them. The problem is that those requirements were designed for different systems operating in different conditions.

The second concerns the scope of the verification problem. This chapter focuses on verification: whether the system was built and operated correctly relative to specified controls, and whether the evidence required to confirm that can be reliably assembled. It does not focus on validation: whether the controls themselves reflect the correct regulatory or policy objectives. Validation failures are a real problem in compliance practice, but they are a different problem. The Trust Gap is a verification problem. Addressing it requires mechanisms that generate and preserve evidence of control operation during system execution, not mechanisms that improve the design of the controls themselves.

The Structural Conditions That Require a Different Approach

The Trust Gap is not a problem that more rigorous documentation practice can solve, because the problem is not a documentation quality problem. It is a structural mismatch between the speed and architectural characteristics of modern digital infrastructure and the verification models applied to it.

More frequent audits reduce the temporal gap but do not eliminate it, and they impose costs that scale with frequency while the underlying structural problem persists between audit events. Better documentation practices improve the accuracy of prospective descriptions of intent but do not close the distance between documented intent and runtime behavior. Larger volumes of operational data provide more material for retrospective analysis but do not resolve the challenge of assembling fragmented, transient evidence into coherent verification artifacts.

The conditions that produce the Trust Gap point toward a different class of solution: one in which evidence of control operation is generated continuously during system execution rather than assembled retrospectively after the fact. One in which enforcement and attestation artifact generation are embedded in the operational architecture of the system rather than layered over it after deployment. One in which the compliance record is a byproduct of system operation rather than a separate process that attempts to reconstruct what system operation produced.

This is what compliance as infrastructure means. The remainder of this manuscript examines its architecture in detail.
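As a preview of the idea only, and not the architecture the later chapters describe, the sketch below shows one way a control check could emit evidence as a byproduct of running. Everything in it is an assumption made for illustration: the `EvidenceLog` class, the `evidenced` decorator, the control name, and the choice of a SHA-256 hash chain to make later alteration detectable. The log lives in memory, so it demonstrates generation and tamper detection but not durable or independently held storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from functools import wraps

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body):
    """Deterministic SHA-256 over a record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class EvidenceLog:
    """Append-only log in which each record commits to the hash of the previous one."""

    def __init__(self):
        self.records = []

    def append(self, control_id, outcome):
        body = {
            "control_id": control_id,
            "outcome": outcome,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS,
        }
        self.records.append({**body, "hash": _digest(body)})

    def verify(self):
        """Recompute every hash and check every link; True if the chain is intact."""
        prev_hash = GENESIS
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
                return False
            prev_hash = record["hash"]
        return True


def evidenced(log, control_id):
    """Decorator: record the outcome of a control check each time it runs."""
    def decorator(check):
        @wraps(check)
        def wrapper(*args, **kwargs):
            result = check(*args, **kwargs)
            log.append(control_id, "pass" if result else "fail")
            return result
        return wrapper
    return decorator


log = EvidenceLog()


@evidenced(log, control_id="encryption-at-rest")  # hypothetical control name
def volume_is_encrypted(volume):
    return volume.get("encrypted", False)


volume_is_encrypted({"id": "vol-1", "encrypted": True})
volume_is_encrypted({"id": "vol-2", "encrypted": False})
print(len(log.records), "records; chain intact:", log.verify())
```

The design point is that the record is written at the moment the control executes, by the same code path that enforces it, so no later reconstruction is needed. Editing any stored record afterward causes `verify()` to return `False`.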

What this chapter has established is the epistemic problem that makes that architecture necessary. Modern digital infrastructure creates a structural Trust Gap between system behavior and the ability of external parties to independently confirm that behavior. When direct verification becomes difficult, trust substitution follows. When trust substitution dominates, compliance posture decouples from operational reality. Addressing that decoupling requires embedding verification capacity within the infrastructure itself: treating compliance not as an overlay applied to systems after they are built, but as a structural property of systems as they operate.

The Trust Gap names the condition. Part III examines the three mechanics through which it accumulates: Documentation Debt, Evidence Latency and Enforcement Drift, and the Oracle Problem. Together they constitute the complete failure analysis on which the architecture chapters rest.