Evidence Latency and Enforcement Drift

Why Compliance Evidence Describes the Past, Not the Present

Section 1: The Temporal Problem in Compliance Verification

Compliance verification is a temporal problem before it is a technical one.

Modern software systems change continuously. Deployment pipelines push code multiple times per day. Infrastructure configurations drift through automated provisioning, dependency updates, and environment-specific overrides. Service dependencies shift as integrations are added, deprecated, or renegotiated. What the system was doing at 09:00 is not necessarily what it is doing at 09:15.

Compliance verification, by contrast, operates on cycles. Audits are scheduled quarterly, annually, or in response to incidents. Evidence packages are assembled at defined review points. Attestations are produced at moments selected for their administrative convenience, not their operational relevance. The verification system is periodic. The operational system is continuous.

This mismatch is not incidental. It is structurally embedded in how compliance programs were designed - for environments where systems changed slowly, where configuration was manual and deliberate, and where the gap between a system's defined state and its operational state could be closed by inspection and attestation.

Those environmental assumptions no longer hold. The gap between verification cadence and system change velocity is the primary source of verification failure in modern infrastructure.

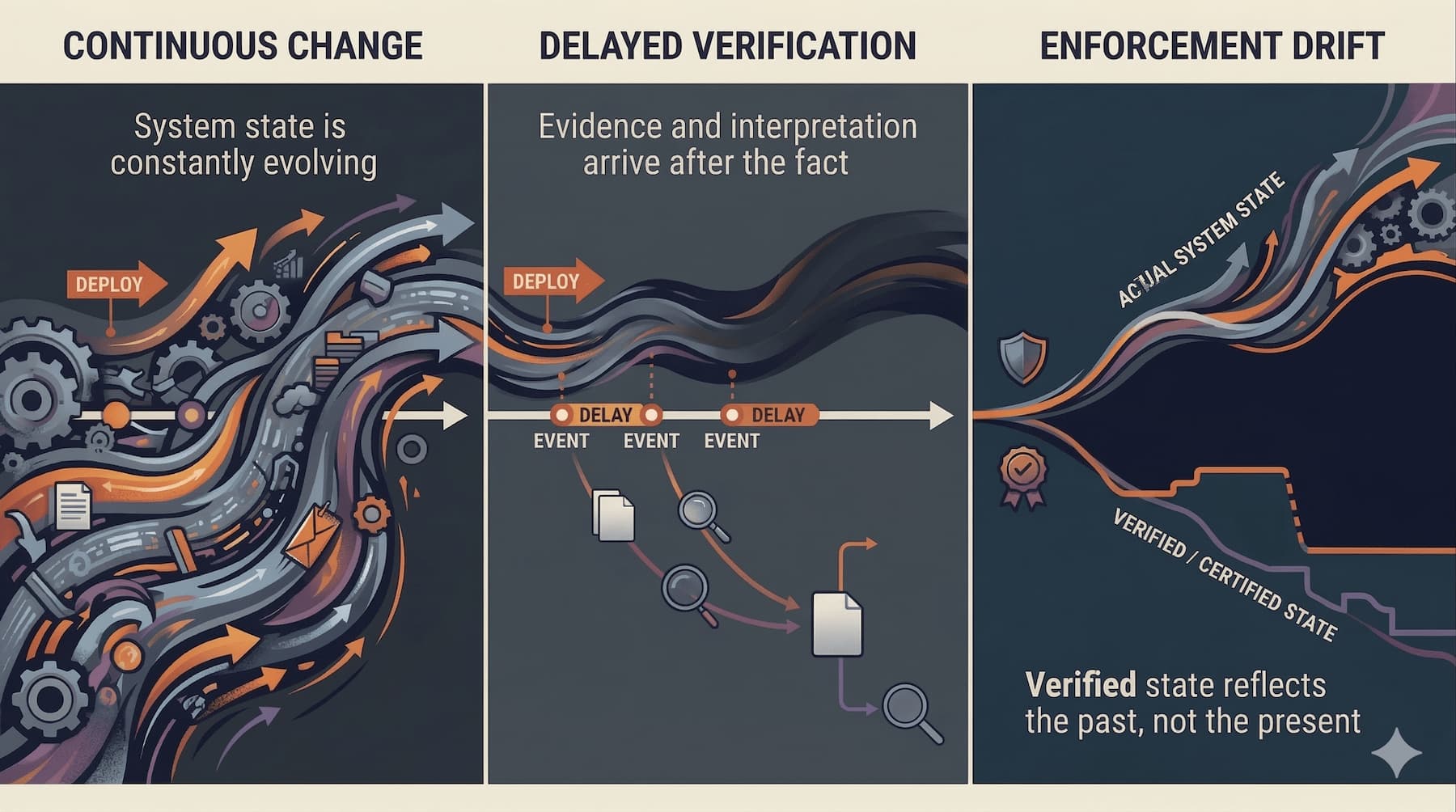

Understanding how that gap produces risk requires examining two distinct dynamics: evidence latency, which describes the delay before verifiable compliance information becomes available, and enforcement drift, which describes the divergence that accumulates in the interval before verification occurs.

Section 2: Evidence Latency

Evidence latency is the time gap between system behavior and the moment when verifiable compliance evidence describing that behavior becomes available for evaluation.

This definition establishes three distinct events: the behavior itself, the generation of evidence describing that behavior, and the availability of that evidence for compliance evaluation. Each transition introduces delay. The transitions are not automatic. Each depends on mechanisms that can fail, lag, or produce outputs of varying reliability.

Evidence latency has two structurally distinct dimensions.

Signal Latency

Signal latency is the delay between the execution of system behavior and the generation or collection of operational signals that describe that behavior. Operational signals include logs, telemetry streams, configuration state snapshots, event traces, and audit records.

Signal latency is the dimension most compliance programs attempt to address through technical investment. Log aggregation pipelines, real-time telemetry systems, and configuration management databases are engineering responses to signal latency. When an organization claims near-real-time visibility into its operational state, it is primarily claiming to have reduced signal latency.

Signal latency, however, is not the primary failure mode. In well-instrumented environments, signals are generated with low latency. Operational telemetry may be available within seconds of a system event. The technical problem of capturing signals at scale is tractable and increasingly well-solved.

Semantic Latency

Semantic latency is the delay between the availability of evidence and the ability of institutions to determine whether that evidence represents compliant or non-compliant behavior.

Semantic latency is structurally different from signal latency. It does not emerge from technical pipeline delays. It emerges from the interpretive process that converts observed system behavior into a compliance judgment.

Several factors produce semantic latency:

Regulatory language ambiguity. Statutory and regulatory obligations are written in natural language, often deliberately flexible to accommodate diverse regulated environments. The same regulatory text may be read differently across institutions, jurisdictions, or enforcement periods. Evidence may be available, but whether it satisfies an ambiguously stated obligation cannot be determined mechanically.

Shifting enforcement interpretation. Regulatory interpretation evolves through enforcement actions, guidance documents, agency communications, and judicial decisions. A behavioral pattern that was acceptable under one interpretive regime may be characterized as non-compliant under a subsequent one - potentially retroactively.

Unresolved factual context. Compliance determinations often require contextual information that extends beyond the primary evidence stream. Whether a configuration deviation constitutes a material control failure may depend on whether the deviation was compensated by other controls, whether it occurred within a defined exception window, or whether it produced any observable operational consequence.

The implication is significant: evidence latency does not disappear when telemetry is real-time. Signal latency and semantic latency are independent. An organization can have complete, immediate signal coverage and still face substantial semantic latency because the interpretive process has not resolved whether the signals it holds demonstrate compliance.

Compliance systems that treat evidence availability as equivalent to compliance determination have collapsed a two-stage process into one. That collapse is an architectural defect.
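The separation between the two dimensions can be made concrete by recording them separately. The following is a minimal sketch that assumes a hypothetical `EvidenceRecord` type with three timestamps (behavior, signal capture, judgment). None of these names come from a specific product. A record with no judgment remains `UNDETERMINED`, and its semantic latency keeps growing even though its signal latency is fixed and small.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Determination(Enum):
    COMPLIANT = "compliant"
    NON_COMPLIANT = "non_compliant"
    UNDETERMINED = "undetermined"  # evidence exists, but no judgment has been reached


@dataclass(frozen=True)
class EvidenceRecord:
    """Hypothetical record that keeps signal capture and compliance judgment separate."""
    behavior_at: datetime                 # when the system behavior occurred
    signal_at: datetime                   # when a signal describing it was captured
    judged_at: Optional[datetime] = None  # when a compliance judgment was reached, if ever
    determination: Determination = Determination.UNDETERMINED

    def __post_init__(self) -> None:
        # Refuse to collapse the two stages: a determination requires a judgment time.
        if (self.judged_at is None) != (self.determination is Determination.UNDETERMINED):
            raise ValueError("determination and judged_at must be set together")

    def signal_latency(self) -> timedelta:
        return self.signal_at - self.behavior_at

    def semantic_latency(self, now: datetime) -> timedelta:
        # An unresolved judgment keeps accruing semantic latency up to `now`.
        end = self.judged_at if self.judged_at is not None else now
        return end - self.signal_at
```

For example, a record with a five-second signal latency and no judgment three days later reports a semantic latency of nearly three days. Signal coverage is complete, but compliance status is unresolved.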

Section 3: Why Evidence Does Not Immediately Produce Compliance Judgments

The assumption that evidence generates compliance judgments automatically is embedded in many compliance monitoring architectures. Dashboards display control status as green or red. Monitoring systems flag policy violations in real time. These interfaces suggest that compliance is a binary, directly observable property of a system.

That model is incomplete.

A compliance judgment is not a function of evidence alone. It is a function of evidence interpreted against a standard, where both the evidence and the standard are subject to uncertainty.

The Interpretive Gap

Translating regulatory obligations into enforceable operational constraints requires interpretation. Regulatory text defines obligations in general terms. Operational constraints must specify exact conditions, thresholds, configurations, and behaviors. The translation from regulatory obligation to operational constraint is not deterministic. It requires legal judgment, domain expertise, and organizational policy decisions that are themselves contestable.

When evidence is evaluated, it is evaluated against these translated constraints - not directly against regulatory text. The translation layer therefore introduces its own source of policy risk. If the translation was incorrect or incomplete, evidence that satisfies the defined constraint may not satisfy the underlying regulatory obligation, and evidence that fails the defined constraint may still satisfy the regulatory obligation under an alternative interpretation.
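One way to keep translation risk visible is to evaluate evidence against more than one candidate translation of the same obligation and to report disagreement rather than resolve it silently. The sketch below assumes a hypothetical `evaluate` function and a hypothetical log-retention obligation with two competing operational readings (90 days and 365 days). The thresholds are illustrative and are not drawn from any regulation.

```python
from enum import Enum
from typing import Callable, Dict, Mapping


class Determination(Enum):
    COMPLIANT = "compliant"
    NON_COMPLIANT = "non_compliant"
    UNDETERMINED = "undetermined"


# One operational translation of an obligation: observed evidence -> pass/fail.
Constraint = Callable[[Mapping[str, int]], bool]


def evaluate(evidence: Mapping[str, int],
             interpretations: Dict[str, Constraint]) -> Determination:
    """Evaluate evidence against every candidate translation of a single obligation.

    Unanimous agreement yields a determination. Any disagreement is reported as
    UNDETERMINED instead of defaulting to the adopted translation.
    """
    if not interpretations:
        return Determination.UNDETERMINED
    results = [check(evidence) for check in interpretations.values()]
    if all(results):
        return Determination.COMPLIANT
    if not any(results):
        return Determination.NON_COMPLIANT
    return Determination.UNDETERMINED


# Hypothetical competing readings of "retain access logs for an adequate period".
retention_readings: Dict[str, Constraint] = {
    "adopted_90_days": lambda e: e.get("log_retention_days", 0) >= 90,
    "alternative_365_days": lambda e: e.get("log_retention_days", 0) >= 365,
}
```

Evidence showing 180 days of retention satisfies the adopted constraint but not the alternative reading. It is therefore reported as undetermined rather than compliant.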

Regulatory Ambiguity as a Design Feature

Regulatory ambiguity is not always a drafting failure. In many regulatory environments, ambiguity is deliberate. Prescriptive requirements create brittleness: sophisticated actors comply technically while violating the purpose of the regulation; technological change makes specific requirements obsolete rapidly; different regulated entities require different operational implementations of the same principle.

Principles-based regulation addresses these problems by stating obligations at a level of abstraction that requires interpretation to operationalize. The consequence is that compliance evidence must be interpreted rather than simply checked.

Compliance systems designed for binary evaluation against precise thresholds will underperform in principles-based regulatory environments. The architecture must accommodate interpretive uncertainty as a persistent condition rather than a temporary gap to be closed.

Retroactive Interpretation

A further complication is temporal. Regulatory interpretation can change retroactively. An enforcement action issued today may characterize behavior from two years ago as non-compliant under an interpretive standard that was not clearly established at the time the behavior occurred. Evidence that was treated as demonstrating compliance at the time it was generated may be reclassified when evaluated under a subsequent interpretive regime.

This is not a hypothetical risk. It is a recurring feature of regulatory enforcement in financial services, healthcare, data protection, and critical infrastructure sectors.

The implication for compliance architecture is that evidence must be retained with sufficient context to support retrospective reinterpretation. Generating evidence is necessary but not sufficient. The evidence generation mechanism must preserve the interpretive context in which the evidence was produced - the constraint definition that applied, the policy baseline in effect, and the regulatory interpretation that was operative at the time of observation.
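A minimal sketch of such an evidence record follows. It assumes a hypothetical `ContextualEvidence` type that keeps the raw observation verbatim alongside the constraint definition, policy baseline, and interpretation that applied when the evidence was captured. Because the context travels with the evidence, the same observations can later be re-evaluated under a different interpretation. The field names and identifiers are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, List, Mapping, Tuple


@dataclass(frozen=True)
class ContextualEvidence:
    observed_on: date
    observation: Mapping[str, int]  # raw observed state, stored verbatim
    constraint_id: str              # constraint definition that applied
    constraint_version: str         # version of that definition at observation time
    policy_baseline: str            # internal policy baseline in effect
    interpretation_ref: str         # regulatory interpretation operative at the time


def reinterpret(records: List[ContextualEvidence],
                new_rule: Callable[[Mapping[str, int]], bool]) -> List[Tuple[date, str, bool]]:
    """Re-evaluate retained raw observations under a later interpretation.

    Each result keeps the interpretation originally in force, so a reviewer can
    see which historical conclusions change under the new reading.
    """
    return [(r.observed_on, r.interpretation_ref, new_rule(r.observation))
            for r in records]
```

A reinterpretation that raises a retention threshold, for example, can be applied to every retained observation without reconstructing what the system looked like at the time.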

Section 4: The Emergence of Enforcement Drift

Enforcement drift is the divergence between defined compliance constraints and the behavior actually occurring in operational systems over time.

Drift is a dynamic property. It is not a discrete event observed at a single moment; it accumulates over time. Understanding drift requires understanding the mechanism through which it emerges.

Step 1: Continuous System Change. Modern software infrastructure evolves through continuous deployment pipelines, automated provisioning, dependency updates, feature releases, configuration changes, and infrastructure scaling events. The rate of change in production systems has increased substantially over the past decade as organizations have adopted continuous delivery practices, microservice architectures, containerized infrastructure, and cloud-native deployment models.

In this environment, the assumption that a system verified at one point in time will remain in that state until the next verification event is operationally implausible. Systems change between verification cycles - not because of negligence or deliberate evasion, but because change is the operational mode.

Step 2: Delayed Evidence Generation. Evidence describing system changes may not be generated immediately. Signal latency introduces a gap between the occurrence of a configuration change and the availability of evidence documenting that change. In some architectures, configuration state is captured only at scheduled intervals rather than continuously. Change events may not be logged with sufficient granularity to support compliance evaluation.

During this period, the operational system has changed, but the evidence layer has not yet captured the change. The compliance record remains consistent with the prior state.
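A small simulation shows the effect of interval-based capture. The sketch below assumes a hypothetical `snapshot_view` helper, integer timestamps in minutes, and a configuration that is changed and then reverted between two scheduled snapshots. In this scenario the non-compliant interval never appears in the evidence record.

```python
from typing import List, Tuple


def snapshot_view(changes: List[Tuple[int, str]],
                  snapshot_times: List[int],
                  initial_state: str) -> List[Tuple[int, str]]:
    """Return the states a scheduled snapshotter would record.

    changes: (time, new_state) pairs; snapshot_times: capture times.
    Only the state in force at each capture time is recorded.
    """
    ordered = sorted(changes)
    state = initial_state
    i = 0
    view = []
    for t in sorted(snapshot_times):
        # Apply every change that happened at or before this capture.
        while i < len(ordered) and ordered[i][0] <= t:
            state = ordered[i][1]
            i += 1
        view.append((t, state))
    return view


# MFA disabled at minute 10 and re-enabled at minute 50; snapshots run hourly.
changes = [(10, "mfa_disabled"), (50, "mfa_required")]
hourly = snapshot_view(changes, [0, 60, 120], initial_state="mfa_required")
```

A capture at minute 30 would have recorded the deviation. The hourly schedule instead produces a record that is consistent with continuous compliance.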

Step 3: Interpretation Delay. When evidence is generated, it must be interpreted to produce a compliance judgment. As described in the prior section, this process is not instantaneous. Interpretive uncertainty, regulatory ambiguity, and institutional decision-making cycles all introduce latency between evidence availability and compliance determination.

During this period, the system has changed, evidence describing the change has been captured, but the compliance status of the change has not been determined.

Step 4: Accumulated Divergence. The combination of continuous change, delayed evidence generation, and interpretation delay creates a compounding dynamic. Each cycle of system change adds to the potential divergence between defined constraints and operational behavior. Each delay in the evidence and interpretation chain extends the window during which divergence is undetected.

The divergence is not static. It compounds. As subsequent changes occur while prior changes remain unverified, the cumulative distance between the defined compliance state and the operational state grows.
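The compounding window can be tracked by classifying each change according to how far it has progressed through the evidence and interpretation chain at a given moment. The sketch below assumes a hypothetical `Change` record with optional evidence and judgment times (in days). It uses an illustrative scenario: one change per day, evidence captured a day later, and judgments reached only at a day-90 review.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Change:
    changed_at: float
    evidenced_at: Optional[float] = None  # None: no evidence captured yet
    judged_at: Optional[float] = None     # None: no compliance determination yet


def status_at(changes: List[Change], t: float) -> Dict[str, int]:
    """Count changes that have occurred by time t, by the stage reached at t."""
    counts = {"undetected": 0, "awaiting_judgment": 0, "verified": 0}
    for c in changes:
        if c.changed_at > t:
            continue  # change has not happened yet
        if c.evidenced_at is None or c.evidenced_at > t:
            counts["undetected"] += 1
        elif c.judged_at is None or c.judged_at > t:
            counts["awaiting_judgment"] += 1
        else:
            counts["verified"] += 1
    return counts


# Illustrative scenario: changes on days 1-10, evidenced next day, judged at day 90.
changes = [Change(changed_at=d, evidenced_at=d + 1, judged_at=90) for d in range(1, 11)]
```

The awaiting-judgment count grows with every change until the review occurs. During that window, nothing in the record distinguishes a benign change from a divergent one.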

Step 5: Enforcement Drift. By the time compliance verification occurs, the evidence describes historical system states. Enforcement decisions are made against a representation of the system that may no longer correspond to operational reality. This is enforcement drift: the enforcement mechanism is operating against a system model that has moved.

The consequence is not merely administrative inconvenience. Enforcement drift means that the compliance program is certifying historical behavior while current behavior remains unverified. In regulated environments where the relevant risk is current operational behavior - data handling practices, access control configurations, transaction processing logic - enforcing against historical evidence provides only the appearance of assurance.

Enforcement drift is the accumulation of temporal and interpretive gaps, where compliance verification reflects past system behavior while current behavior remains unverified.

Section 5: Drift Baselines

Drift cannot be evaluated without a defined reference baseline. The statement that a system has drifted is meaningful only relative to a specific prior state that the system is supposed to maintain.

The choice of baseline is an architectural decision with significant consequences.

Possible Baseline Types

Internal operational policy definitions. The organization defines its compliance constraints in internal policy documents, control libraries, or configuration standards. Drift is evaluated as deviation from those internal definitions. This baseline is fully within organizational control and can be updated as operational requirements change. Its limitation is that internal policies may themselves drift from regulatory obligations, creating policy-level drift beneath the surface of apparent technical compliance.

Explicit statutory or regulatory obligations. Drift is evaluated directly against the requirements of applicable regulation. This baseline has maximum regulatory relevance but requires translating regulatory language into operational constraints - the translation layer problem described earlier. Direct evaluation against regulatory text is often not practically achievable at the operational level without an intermediate constraint specification.

Contractual obligations. For regulated entities operating under contracts, service agreements, or consent orders, contractual obligations may define the operative compliance standard. Drift evaluation against contractual baselines may differ from regulatory baselines in scope, specificity, and enforceability.

Industry standard practices. Frameworks such as the NIST Cybersecurity Framework, NIST SP 800-53, ISO/IEC 27001, or sector-specific control standards define reference baselines independent of specific statutory obligations. Drift measured against these standards may provide defensible evidence of due diligence in enforcement proceedings even where the standard is not itself legally mandated.

Operational Baseline for This Chapter

For purposes of this chapter, enforcement drift is defined relative to the operational constraints implemented within the organization's systems - the constraints that have been translated from regulatory or policy obligations into machine-interpretable rules governing system behavior.
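Under this scoping, a baseline can be represented as a set of named, machine-interpretable rules, and drift can be represented as the subset of rules that the observed state fails. The sketch below assumes a hypothetical dictionary-of-predicates baseline and illustrative configuration keys. Real constraint specifications would be richer.

```python
from typing import Callable, Dict, List, Mapping

Observed = Mapping[str, object]

# Hypothetical baseline: constraint id -> rule over observed configuration.
# A missing key fails its rule, so absent evidence is never treated as conformance.
BASELINE: Dict[str, Callable[[Observed], bool]] = {
    "encryption-at-rest": lambda o: o.get("storage_encrypted") is True,
    "tls-minimum": lambda o: o.get("min_tls_version", (0, 0)) >= (1, 2),
    "no-public-access": lambda o: o.get("public_access") is False,
}


def drift_against(baseline: Dict[str, Callable[[Observed], bool]],
                  observed: Observed) -> List[str]:
    """Return the ids of baseline constraints the observed state does not satisfy."""
    return sorted(cid for cid, rule in baseline.items() if not rule(observed))
```

The output is meaningful only relative to `BASELINE`. The same observed state evaluated against a different baseline would yield a different drift set.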

This scoping is deliberate. It focuses the analysis on the verification problem: whether systems are doing what their constraint definitions require, rather than whether constraint definitions are adequate representations of regulatory obligations. The adequacy of constraint translation is a separate architectural concern addressed in the Translation Layer chapter.

Execution Drift and Policy Drift

Drift must be further distinguished by type.

Execution drift is divergence between defined operational constraints and actual system behavior. The policy is correctly defined; the system is not operating in conformance with it. Execution drift is a detection and remediation problem.

Policy drift is divergence between the organization's documented compliance policies and the regulatory obligations those policies are intended to satisfy. The systems may be operating in exact conformance with internal policy, but the policy itself no longer reflects current regulatory requirements. Policy drift is a governance and interpretation problem.

A compliance infrastructure that addresses only execution drift may report consistent compliance while policy drift produces sustained regulatory exposure. Both dimensions must be monitored.
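The two comparisons can be kept separate in code. The sketch below is a simplification that assumes hypothetical sets of control identifiers: the controls the current regulatory reading requires, the controls internal policy defines, and the controls observed operating. Execution drift compares operation to policy. Policy drift compares policy to the regulatory reading.

```python
from typing import Dict, Set


def classify_drift(regulatory_required: Set[str],
                   policy_defined: Set[str],
                   operating: Set[str]) -> Dict[str, Set[str]]:
    """Separate execution drift from policy drift.

    execution_drift: controls defined in policy but not observed operating.
    policy_drift: controls required by the current regulatory reading but
    absent from internal policy.
    """
    return {
        "execution_drift": policy_defined - operating,
        "policy_drift": regulatory_required - policy_defined,
    }


# Illustrative case: systems match policy exactly, but policy lags the regulation.
result = classify_drift(
    regulatory_required={"mfa", "encryption", "log-retention-365d"},
    policy_defined={"mfa", "encryption", "log-retention-90d"},
    operating={"mfa", "encryption", "log-retention-90d"},
)
```

A monitor that reported only the first set would show no drift in this case, even though the policy itself no longer meets the regulatory reading.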

Section 6: Limits of Periodic Verification

Periodic verification was the dominant compliance model for environments where system change was slow, manual, and deliberate. Those environmental assumptions have changed substantially. Understanding why periodic verification fails in modern infrastructure requires analyzing the model's structural constraints.

Verification Frequency and Change Velocity

The fundamental constraint is the relationship between verification frequency and system change velocity. For periodic verification to produce accurate compliance assurance, the system must change slowly enough between verification events that the verified state remains representative of the operational state.

In modern infrastructure, this condition is not satisfied. In continuous delivery environments, hundreds or thousands of change events may occur between scheduled audit cycles. Each change event is a potential divergence point. The aggregate divergence across all change events during a verification interval may be substantial even if each individual change appears minor.
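A rough calculation illustrates the scale. The figures below are assumptions chosen for illustration, not measurements: 20 production changes per day over a 90-day audit interval, and a hypothetical 0.1% chance that any single change introduces a divergence, with changes treated as independent.

```python
def changes_in_interval(changes_per_day: float, interval_days: int) -> float:
    """Number of change events between two verification points."""
    return changes_per_day * interval_days


def prob_any_divergence(n_changes: int, p_per_change: float) -> float:
    """Probability that at least one of n independent changes diverges."""
    return 1.0 - (1.0 - p_per_change) ** n_changes


n = int(changes_in_interval(20, 90))   # 1800 changes per quarter
p_any = prob_any_divergence(n, 0.001)  # roughly 0.83
```

The per-change divergence rate would look negligible in isolation. Even so, the chance that at least one divergence exists somewhere in the interval is high.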

Point-in-Time Certification

Periodic verification produces point-in-time certifications: attestations that the system met defined criteria at a specific moment of observation. The certification is accurate at the moment of observation. Its validity degrades as the system evolves away from the certified state.

Point-in-time certification is adequate when certified states are stable. For operational infrastructure that is continuously modified, point-in-time certification produces a certificate whose accuracy decays continuously from the moment it is issued. The certificate describes a system that no longer exists in the form certified.
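Certificate decay can be made measurable by comparing the certified scope against the change log. The sketch below assumes a hypothetical `Certification` record and a change log of `(day, component)` pairs. It reports how much of the certified scope is still in the state that was actually observed.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Certification:
    issued_on_day: int
    scope: FrozenSet[str]  # components covered by the attestation


def changes_since(cert: Certification, change_log: List[Tuple[int, str]]) -> int:
    """Count in-scope changes made after the certification was issued."""
    return sum(1 for day, comp in change_log
               if day > cert.issued_on_day and comp in cert.scope)


def unchanged_fraction(cert: Certification, change_log: List[Tuple[int, str]]) -> float:
    """Fraction of certified components untouched since issuance."""
    if not cert.scope:
        return 1.0
    touched = {comp for day, comp in change_log
               if day > cert.issued_on_day and comp in cert.scope}
    return 1 - len(touched) / len(cert.scope)
```

With a three-component scope in which one component has changed twice since issuance, only two-thirds of the certified scope still matches the state the certificate describes. The certificate itself is unchanged.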

Sampling Limitations

Periodic verification typically involves sampling. Auditors review a subset of systems, a subset of configurations, and a subset of transactions to form a judgment about the whole. Sampling is statistically defensible under specific conditions: when the sampled population is homogeneous, when the sampling is representative, and when conditions are stable across the sampling period.

Modern infrastructure environments often violate all three conditions. Systems are heterogeneous across deployment environments. Sampling methodologies developed for stable environments may not capture the dynamically changing components that represent the highest compliance risk. And conditions change during the sampling period, sometimes materially.
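Even under the idealized conditions that sampling assumes, a small sample can easily miss concentrated failures. Assume simple random sampling without replacement from a static population, which are precisely the conditions the paragraph above says modern environments violate. Under those assumptions, the probability that a sample contains none of the non-compliant items follows the hypergeometric distribution. The figures below are illustrative.

```python
from math import comb


def prob_sample_misses_all(population: int, noncompliant: int, sample_size: int) -> float:
    """P(a simple random sample without replacement contains no non-compliant item)."""
    if not (0 <= noncompliant <= population and 0 <= sample_size <= population):
        raise ValueError("counts must satisfy 0 <= k <= N and 0 <= n <= N")
    # Hypergeometric: C(N - K, n) / C(N, n); comb returns 0 when n > N - K.
    return comb(population - noncompliant, sample_size) / comb(population, sample_size)


# 2,000 configurations, 10 non-compliant, auditors sample 60.
p_miss = prob_sample_misses_all(2000, 10, 60)  # roughly 0.74
```

In this illustration, roughly three samples in four would find nothing. The calculation is a best case, because it ignores the heterogeneity and mid-period change described above.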

Incident-Driven Discovery

The practical consequence of periodic verification's limitations is that compliance failures are often discovered through incidents rather than through routine verification. A security incident reveals a configuration that should have been detected by access control monitoring. A regulatory inquiry surfaces transaction patterns that periodic reporting did not flag. A customer complaint triggers an investigation that exposes a gap between stated and operational data handling practices.

Incident-driven discovery is the most expensive form of compliance verification. The cost is not only the direct cost of remediation and potential penalties. The cost includes the cumulative undetected risk during the interval between the original divergence and its discovery, the reputational exposure of a failure that became visible externally, and the credibility damage of a compliance program that was unable to detect its own failures.

Section 7: Organizational Incentives and Strategic Ambiguity

Compliance infrastructure that generates continuous evidence creates organizational exposure. Understanding this dynamic is necessary for designing systems that will actually be adopted and maintained rather than technically deployed and operationally circumvented.

The Liability Surface Problem

Evidence generation infrastructure produces records. Those records document compliance status - including periods of non-compliance, near-misses, and control failures that were remediated before external discovery. In adversarial contexts - regulatory investigations, litigation, enforcement proceedings - those records become discoverable artifacts.

Organizations that operate with periodic verification and selective documentation maintain a degree of ambiguity about their historical compliance posture. That ambiguity may have strategic value. Continuous evidence generation eliminates that ambiguity. It replaces plausible deniability with a documented record that includes failures.

This is not an argument against continuous evidence generation. It is an explanation for organizational resistance to it. Compliance architects must understand that they are proposing to eliminate strategic ambiguity, and that stakeholders who have benefited from that ambiguity may resist the architecture even when they cannot articulate the resistance in those terms.

The Incentive Misalignment

In many organizations, compliance programs are evaluated on their ability to produce clean audit outcomes rather than on their ability to accurately represent operational risk. A compliance program that generates continuous evidence and surfaces frequent control failures may appear less successful - by the metric of audit outcomes - than a program that generates periodic evidence and produces consistently clean reports, regardless of whether the reports accurately reflect operational behavior.

This incentive structure creates pressure toward evidence generation mechanisms that confirm compliance rather than mechanisms that accurately measure compliance status. Infrastructure that automatically surfaces liability may be opposed not because it fails technically but because it succeeds functionally in ways that conflict with organizational incentives.

Regulatory Interpretation and Enforcement Discretion

Regulatory agencies exercise enforcement discretion. The decision to investigate, the decision to pursue formal enforcement action, and the decision to impose specific remedies all involve discretionary judgment. In this environment, organizations operating in good faith under genuine interpretive uncertainty may prefer ambiguous evidence to unambiguous evidence of technical non-compliance.

Compliance architecture must acknowledge this reality. Designing infrastructure that generates continuous evidence without simultaneously building the interpretive and governance mechanisms to manage that evidence creates a compliance program that documents its own failures without the capacity to contextualize them. The evidence generation function and the evidence interpretation function must be co-designed.

Circular Validation

A distinct structural risk is circular validation: architectures where the entity generating evidence also controls the verification of that evidence. This is the compliance analog of the internal controls problem in financial auditing - self-assessment may be accurate, but it cannot provide independent assurance.

Circular validation risks are not always deliberate. They can emerge from expedient design choices: using the same infrastructure team to build both operational systems and the monitoring systems that observe them, or allowing the teams being assessed to define the metrics by which they are assessed. These arrangements produce evidence that is structurally predisposed to confirm compliance rather than to accurately measure it.

Independence between observation and enforcement is an architectural requirement, not merely a governance aspiration.
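Independence can be checked mechanically once ownership is recorded. The sketch below assumes a hypothetical inventory in which each control lists three teams: the team that operates the system, the team that owns the monitoring producing its evidence, and the team that defines its pass/fail metric. A control is flagged when the operating team also observes it or defines its measure.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ControlOwnership:
    control_id: str
    operated_by: str        # team that builds and runs the observed system
    observed_by: str        # team that owns the monitoring that produces evidence
    metric_defined_by: str  # team that defines the pass/fail metric


def circular_controls(inventory: List[ControlOwnership]) -> List[str]:
    """Return controls where the assessed team also observes or defines its own measure."""
    return sorted(c.control_id for c in inventory
                  if c.operated_by in (c.observed_by, c.metric_defined_by))


inventory = [
    ControlOwnership("iam-review", "platform", "platform", "compliance"),
    ControlOwnership("log-retention", "platform", "security-monitoring", "compliance"),
    ControlOwnership("backup-restore", "data", "security-monitoring", "data"),
]
```

Team identity is a coarse proxy. Shared reporting lines or shared tooling can also compromise independence, and this check would not detect either.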

Section 8: Diagnostic Summary

The preceding analysis establishes a structured explanation for why compliance evidence often describes the past rather than the present.

Three Interacting Forces

Evidence latency and enforcement drift are not produced by a single failure. They emerge from the interaction of three forces that operate simultaneously.

Evidence generation delay. Operational signals describing system behavior are not always generated at the moment of behavior, captured with sufficient granularity, or preserved with adequate context to support compliance evaluation. Signal latency creates a gap between behavior and the evidence record.

Interpretive uncertainty. Even when evidence is generated, translating that evidence into a compliance judgment requires interpreting regulatory obligations, resolving ambiguous standards, and making contextual determinations that cannot be fully automated. Semantic latency is the delay produced by this interpretive process. It cannot be reduced to zero, but it can be architecturally managed.

Continuous system change. Operational systems change continuously. Each change event is a potential divergence point. The combination of evidence generation delay and interpretive uncertainty means that changes accumulate undetected. The aggregate divergence during an unverified interval may substantially exceed the divergence visible at any single change event.

What the Verification System Is Actually Measuring

A compliance verification system operating under these conditions is not measuring current operational behavior. It is measuring historical operational behavior, filtered through an evidence collection process that captures an incomplete sample of signals, interpreted against standards that may have shifted since the evidence was generated, and evaluated at moments selected for their administrative convenience rather than their operational relevance.

The attestation artifacts produced by this system describe a system model that exists in the evidence record. They do not describe the system as it currently operates. The gap between the attested state and the operational state is enforcement drift.

Architectural Responses

The diagnostic establishes the requirements that a credible compliance verification architecture must address:

- Evidence must be generated continuously and embedded in system operation rather than collected retrospectively. Retrospective collection cannot close the gap between system change velocity and verification cadence.

- Evidence generation must be independent of the systems being observed. Circular validation architectures undermine the credibility of evidence regardless of the technical quality of the signals they produce.

- Semantic latency must be explicitly managed. The compliance architecture must distinguish between the availability of signals and the availability of compliance judgments. Evidence pipelines and interpretation mechanisms must be designed separately and their outputs tracked separately.

- Drift must be measured continuously relative to defined constraint baselines. Compliance status is not a property that can be verified periodically in high-change environments. It is a property that must be monitored as a continuous function.

- Evidence artifacts must be preserved with sufficient context to support retrospective reinterpretation. The interpretive regime in which evidence was produced must be documented alongside the evidence itself.

- Institutional incentives must be acknowledged in the architecture. Systems that generate continuous evidence create organizational exposure. The governance mechanisms that determine how that evidence is evaluated, by whom, and under what standards are as important as the technical mechanisms that generate it.

Bridge to the Oracle Problem

This chapter has established the operational mechanics that produce the trust gap described in the prior chapter. The trust gap - the structural condition under which stakeholders must rely on trust rather than direct verification - is not an abstract governance failure. It is the predictable output of compliance verification systems operating under evidence latency and enforcement drift.

The Oracle Problem completes the failure mechanics argument by identifying the irreducible limit that applies even to well-instrumented, continuously evaluated systems. The Oracle Problem establishes why every verification architecture must terminate at a trust boundary - and how that boundary must be explicitly located, governed, and disclosed rather than obscured.