Control Assertion Engine

Converting Runtime System Behavior into Verifiable Compliance Evidence

The Evidence Gap in Control Verification

Compliance verification has a structural problem that predates digital infrastructure: the controls an organization implements and the evidence an auditor reviews are separated by time.

The conventional audit cycle operates as follows. A control is designed, documented, and deployed. The system then operates - for months, sometimes years - generating activity that the control is meant to govern. When an audit occurs, evidence is assembled after the fact. Logs are retrieved. Screenshots are captured. Attestations are signed. From this reconstructed record, an auditor attempts to infer whether the control functioned correctly throughout the intervening period.

This architecture has a name: retrospective compliance. And it fails in predictable ways.

Evidence gaps emerge wherever system activity occurs without contemporaneous observation. Documentation drift develops as the gap between what a system does and what its documentation claims it does widens over time. Control failures go undetected until an audit surfaces them - at which point the organization is managing a disclosure, not a control. And because auditors are working from partial artifacts rather than behavioral records, their conclusions are probabilistic. They are estimating control effectiveness, not measuring it.

The fundamental issue is not auditor incompetence or documentation negligence. The issue is architectural. A compliance model that generates evidence after behavior has occurred cannot produce a continuous behavioral record. It can only produce a narrative assembled from fragments.

The Control Assertion Engine addresses this gap at the infrastructure layer. Rather than treating evidence generation as a downstream activity that follows system operation, the CAE embeds evidence generation into the execution environment itself. Compliance evidence is produced at the moment system behavior occurs, evaluated against encoded constraints, and preserved in an immutable record available for inspection without reconstruction.

This chapter defines what a Control Assertion Engine is, what it does, what it cannot do, and what is required to build and operate one.

From Monitoring to Assertion

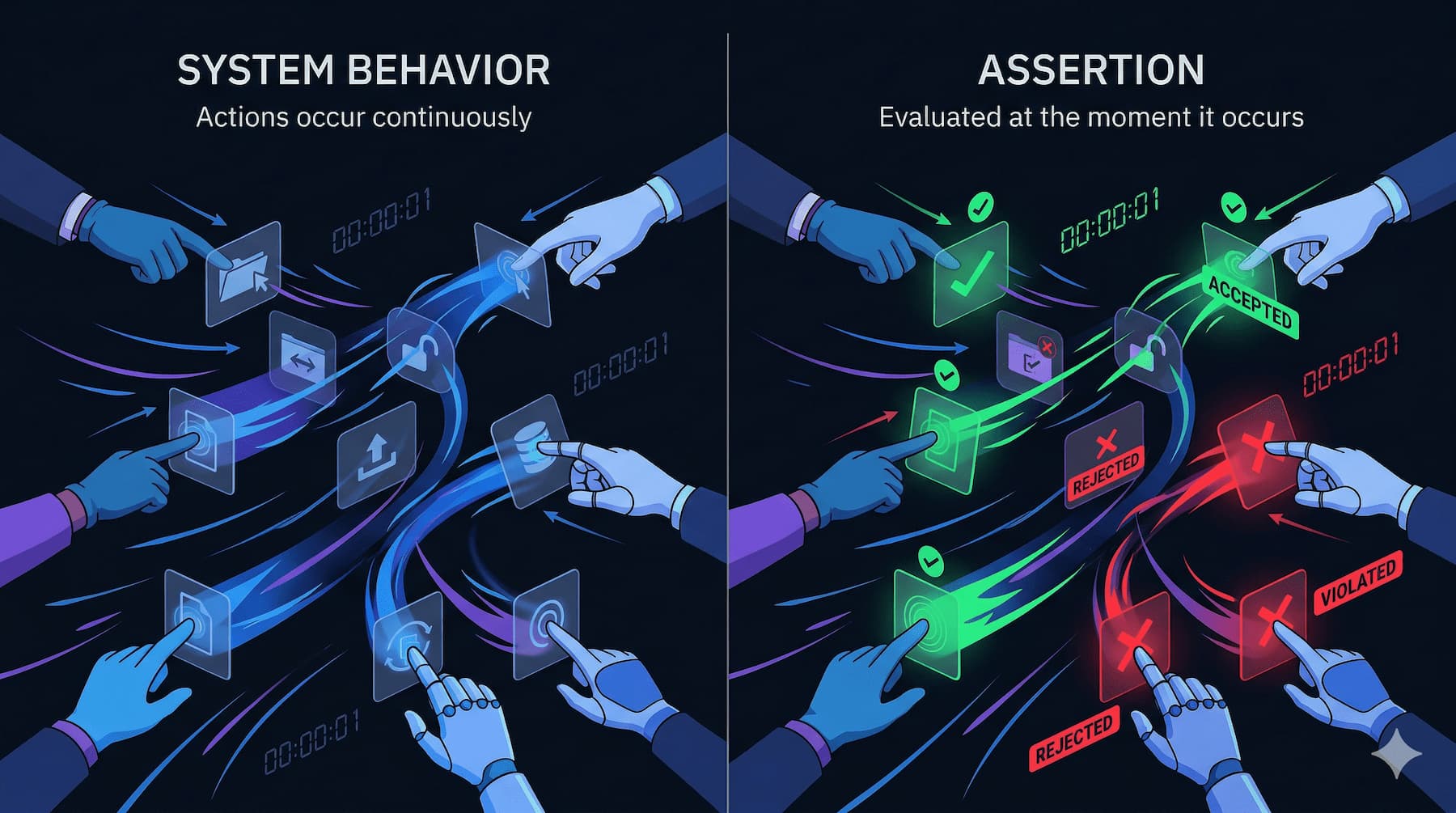

Before defining the CAE, a critical distinction must be established: monitoring is not assertion.

Monitoring generates telemetry. It observes system behavior and records it. A monitoring system can tell you that an API call occurred, that a database transaction completed, that a configuration changed. Monitoring is necessary infrastructure. It is not sufficient for compliance evidence.

Assertion requires an additional step. An assertion is produced when observed behavior is evaluated against an encoded compliance constraint and a structured result is generated describing whether the constraint was satisfied at the moment of evaluation.

The difference is precise:

Monitoring says: An event occurred.

Assertion says: An event occurred, it was evaluated against constraint X, and the result of that evaluation at time T was [satisfied / violated / indeterminate].

A system with comprehensive monitoring but no assertion engine produces logs. Logs are raw material. They can be used to reconstruct behavior, but reconstruction introduces the same evidence latency that characterizes traditional audits. Logs must be interpreted, correlated, and assembled - activities that occur after the fact and are subject to error, selection bias, and incompleteness.

The CAE transforms monitoring infrastructure into compliance evidence infrastructure by inserting constraint evaluation between telemetry collection and evidence storage. The output of the evaluation - the assertion - is a structured, timestamped, machine-generated statement that a specific constraint was satisfied or violated by a specific event at a specific moment. This is qualitatively different from a log entry.
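The distinction can be illustrated with a minimal Python sketch. The `evaluate` function, the `mfa-required` constraint, and the event fields are hypothetical names chosen for illustration; they do not describe a specified CAE interface.

```python
from datetime import datetime, timezone

# A monitoring record: it states only that something happened.
log_entry = {"event_id": "evt-001", "action": "login", "mfa_used": False}

def evaluate(event: dict, constraint_id: str, predicate) -> dict:
    """Turn a telemetry event into an assertion by evaluating a constraint."""
    try:
        result = "satisfied" if predicate(event) else "violated"
    except KeyError:
        # The event lacks the data the constraint needs.
        result = "indeterminate"
    return {
        "constraint_id": constraint_id,
        "event_id": event["event_id"],
        "result": result,
        "evaluated_at": datetime.now(timezone.utc).isoformat(),
    }

# An assertion: the same event, plus the constraint, the result, and the time.
assertion = evaluate(log_entry, "mfa-required", lambda e: e["mfa_used"] is True)
```

The log entry and the assertion describe the same event. Only the assertion records which constraint was applied and what the evaluation concluded.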

Organizations that believe they have assertion infrastructure because they have comprehensive logging have mischaracterized their compliance posture. Logging is a precondition for assertion. It is not a substitute.

What a Control Assertion Engine Actually Does

A Control Assertion Engine is an infrastructure component that evaluates runtime system behavior against encoded compliance constraints and produces structured assertions describing whether those constraints were satisfied at the moment of execution.

This definition has three load-bearing elements that must be held separately.

Evidence in traditional systems is observed after the fact. In the CAE, evidence is produced at execution.

Runtime evaluation. The CAE operates during system execution, not after it. Constraint evaluation occurs as events are generated, not when logs are retrieved. This is what makes runtime evidence structurally different from reconstructed evidence. The temporal gap between behavior and observation is minimized by design.

Encoded compliance constraints. The CAE evaluates behavior against formally defined constraints - machine-readable representations of compliance requirements derived from regulatory interpretation, policy translation, and legal-engineering interface layers upstream in the compliance infrastructure stack. The CAE does not interpret regulations. It evaluates against constraints that prior infrastructure layers have already translated into evaluable form.

Structured assertions. The output of the CAE is not a log entry and not a report. It is a structured assertion object: a machine-generated record containing the constraint identifier, the event identifier, the evaluation result, a timestamp, the evaluation context, and optionally a confidence score. Assertions are the unit of compliance evidence this infrastructure produces.

The CAE transforms compliance from retrospective interpretation into real-time evaluation, where behavior is assessed and recorded at the moment it occurs.

What the CAE does not do is equally important to specify.

The CAE does not verify that a control is effective in the real world. It produces verifiable computational evidence about observable system behavior. These are different claims. An assertion that constraint X was satisfied by event Y at time T is a statement about observed computational behavior. It is not a statement that the underlying regulatory objective was achieved. Verification - the determination that a control achieves its regulatory objective - may require human review, external evidence, physical controls, and policy interpretation. The CAE performs assertion. Verification is a broader process of which assertion is one input.

The CAE also does not operate across the full spectrum of compliance controls. Controls fall into three categories relative to CAE scope:

Computational controls are fully observable by infrastructure. Access control evaluations, transaction validation, configuration enforcement, cryptographic operations - these generate observable events that the CAE can evaluate completely. Assertion coverage for computational controls can approach completeness.

Hybrid controls combine computational and non-computational components. A control that requires both system-enforced access restrictions and human approval workflows is partially observable by infrastructure. The CAE can assert on the computational components. Human activities require separate validation mechanisms. Assertions for hybrid controls are partial by definition and must be characterized as such.

Non-computational controls are outside CAE scope. Physical access controls, in-person procedures, interpersonal governance activities - these do not generate computational events that the engine can evaluate. Attempting to assert on non-computational controls through proxy metrics introduces the compliance theater failure mode discussed later in this chapter.

Scope definition is not a limitation to be engineered around. It is a design constraint to be enforced. An assertion engine that claims scope it cannot substantiate produces evidence that appears credible but is not. This is worse than acknowledged evidence gaps.
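One way to enforce scope as a design constraint is to have the engine refuse to assert outside it. The sketch below assumes a hypothetical `ControlCategory` enumeration and coverage labels ("full", "partial"); both are illustrative choices, not terms defined elsewhere in this chapter.

```python
from enum import Enum

class ControlCategory(Enum):
    COMPUTATIONAL = "computational"
    HYBRID = "hybrid"
    NON_COMPUTATIONAL = "non_computational"

class OutOfScopeError(Exception):
    """Raised when an assertion is requested for a control the engine cannot observe."""

def coverage_label(category: ControlCategory) -> str:
    """Return the coverage label to attach to assertions for a control category."""
    if category is ControlCategory.COMPUTATIONAL:
        return "full"
    if category is ControlCategory.HYBRID:
        # Only the computational component is asserted; human steps need
        # separate validation, so the assertion is labeled partial.
        return "partial"
    # Non-computational controls are rejected rather than asserted by proxy.
    raise OutOfScopeError(f"{category.value} controls are outside CAE scope")
```

Raising an error for non-computational controls makes the scope boundary visible in the evidence pipeline instead of leaving it to reporting conventions.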

Runtime Constraint Evaluation

The CAE exists within a compliance evaluation pipeline. Understanding how constraint evaluation operates requires mapping the components of that pipeline and the data flows between them.

Constraint Definitions

The engine evaluates against constraint definitions: formally encoded representations of compliance logic. These definitions are the downstream product of upstream infrastructure layers - regulatory interpretation, constraint translation, and the legal-engineering interface. The CAE is a consumer of these definitions, not a producer.

Constraints define allowed behaviors, prohibited behaviors, and conditional requirements. They may be deterministic rules - an action is permitted or it is not - or probabilistic thresholds that characterize acceptable ranges of system behavior, or context-sensitive conditions that vary based on system state. The encoding of these distinctions is a constraint design problem, not a CAE design problem, but the CAE must be capable of evaluating all three forms.
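The three constraint forms can be sketched as evaluator functions that share one signature. This is a simplified illustration: the function names, event fields, and the `change_freeze` state key are assumptions, and the threshold form here checks a single measured value rather than a full statistical model.

```python
from typing import Callable

Result = str  # "satisfied" | "violated" | "indeterminate"

def deterministic(allowed_roles: set) -> Callable[[dict, dict], Result]:
    """An action is permitted or it is not."""
    def check(event: dict, state: dict) -> Result:
        return "satisfied" if event.get("role") in allowed_roles else "violated"
    return check

def threshold(field: str, max_value: float) -> Callable[[dict, dict], Result]:
    """A measured value must stay within an acceptable range."""
    def check(event: dict, state: dict) -> Result:
        if field not in event:
            return "indeterminate"
        return "satisfied" if event[field] <= max_value else "violated"
    return check

def context_sensitive(freeze_key: str) -> Callable[[dict, dict], Result]:
    """The rule depends on system state: deployments are blocked during a freeze."""
    def check(event: dict, state: dict) -> Result:
        if freeze_key not in state:
            return "indeterminate"
        blocked = state[freeze_key] and event.get("action") == "deploy"
        return "violated" if blocked else "satisfied"
    return check
```

Because all three forms return the same three-valued result, the engine can evaluate them uniformly without knowing which form a given constraint takes.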

Telemetry Sources

The CAE operates on operational system events: API calls, database transactions, identity events, infrastructure state changes, configuration updates, deployment events. These form the behavioral record against which constraints are evaluated.

Telemetry must satisfy three integrity properties to be usable as the basis for compliance assertions: it must be authenticated (its source must be verifiable), tamper-resistant (it must not be modifiable in transit or at rest before evaluation), and timestamped (the temporal ordering of events must be reliable). Telemetry that does not satisfy these properties cannot support assertions that will survive scrutiny. Evidence built on unauthenticated or tamper-susceptible telemetry is structurally unreliable regardless of the quality of the evaluation layer.
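A minimal sketch of an authenticity check, using HMAC-SHA256 from the Python standard library. The shared key, the event fields, and the rule that untimestamped events are rejected are assumptions made for illustration.

```python
import hashlib
import hmac
import json

def sign_event(event: dict, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over a canonical JSON encoding of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def is_authentic(event: dict, tag: str, key: bytes) -> bool:
    """Check the tag in constant time; reject events that carry no timestamp."""
    if "timestamp" not in event:
        return False
    return hmac.compare_digest(sign_event(event, key), tag)

key = b"demo-key-not-for-production"  # assumption: one shared secret per telemetry source
event = {"event_id": "evt-001", "action": "config_change",
         "timestamp": "2024-01-01T00:00:00Z"}
tag = sign_event(event, key)

# Any change to the event after signing invalidates the tag.
tampered = dict(event, action="noop")
```

A shared-key MAC only shows that the event came from some holder of the key. Where the source must be distinguishable from the verifier, an asymmetric signature scheme would be the more appropriate choice.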

Evaluation Modes

Constraint evaluation occurs in two modes depending on the control architecture.

Synchronous evaluation occurs within the execution path. The system cannot complete an action until constraint evaluation has occurred and produced a result. Access authorization is the canonical example: a request is evaluated against access control constraints before it is permitted. Synchronous evaluation provides the tightest coupling between behavior and assertion but introduces latency into execution paths and creates availability dependencies - if the evaluation system is unavailable, the operational system cannot complete the governed action.

Asynchronous evaluation occurs through event streams separate from the operational execution path. Events are emitted, captured by the evaluation pipeline, and evaluated on a continuous basis. Log processing, pipeline validation, and policy analysis typically operate in asynchronous mode. Asynchronous evaluation introduces an assertion lag - the temporal gap between when an event occurs and when an assertion is generated - but removes the CAE from the operational execution path, reducing the availability and latency impact on production systems.

The choice between synchronous and asynchronous evaluation is an architectural decision that depends on the control type, the latency tolerance of the operational system, and the acceptable assertion lag for the compliance requirement in question. Many production CAE implementations use both modes: synchronous evaluation for controls where immediate enforcement is required, asynchronous evaluation for controls where continuous evidence generation is sufficient.
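The two modes can be contrasted in a short sketch. The `is_permitted` rule and the in-memory queue are hypothetical stand-ins; a production pipeline would use durable event streaming rather than `queue.Queue`.

```python
import queue

def is_permitted(event: dict) -> bool:
    """Hypothetical access constraint: only admins may delete."""
    return event["action"] != "delete" or event["role"] == "admin"

assertions = []

def handle_synchronously(event: dict) -> bool:
    """Evaluate inside the execution path: the action proceeds only if permitted."""
    allowed = is_permitted(event)
    assertions.append((event["event_id"], "satisfied" if allowed else "violated"))
    return allowed

event_stream = queue.Queue()

def emit(event: dict) -> None:
    """Asynchronous mode: the action has already happened; only publish the event."""
    event_stream.put(event)

def drain() -> None:
    """Evaluation pipeline: consume queued events and assert on them after the fact."""
    while not event_stream.empty():
        event = event_stream.get()
        result = "satisfied" if is_permitted(event) else "violated"
        assertions.append((event["event_id"], result))

blocked = handle_synchronously({"event_id": "e1", "action": "delete", "role": "guest"})
emit({"event_id": "e2", "action": "delete", "role": "guest"})
count_before_drain = len(assertions)  # e2 has occurred but is not yet asserted
drain()
```

In the synchronous path the violation prevents the action. In the asynchronous path the same violation is recorded only once the pipeline catches up, which is the assertion lag described above.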

Assertion Generation and Evidence Production

When a constraint evaluation completes, the engine generates an assertion object. The assertion is the atomic unit of compliance evidence produced by this infrastructure.

A complete assertion object contains:

- Constraint identifier: a reference to the specific encoded constraint that was evaluated

- Event identifier: a reference to the specific system event that triggered evaluation

- Evaluation result: the outcome of the evaluation - satisfied, violated, or indeterminate

- Timestamp: a reliable, tamper-resistant record of when the evaluation occurred

- Evaluation context: the state information used during evaluation, sufficient to allow the assertion to be independently understood and reviewed

- Confidence score (where applicable): a representation of the certainty of the evaluation result, relevant for probabilistic or context-sensitive constraints

Each element of the assertion object serves a specific evidential function. The constraint identifier establishes what rule was applied. The event identifier establishes what behavior was observed. The result is the evaluative claim. The timestamp establishes the temporal ordering of compliance events. The context allows the assertion to be interpreted without reconstructing system state. The confidence score, where present, characterizes the epistemic quality of the evaluation.
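The fields listed above map naturally onto a small record type. The following is a sketch under stated assumptions: the field names, the ISO 8601 timestamp string, and the 0 to 1 confidence range are illustrative choices rather than a defined schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from enum import Enum
from typing import Optional

class Result(str, Enum):
    SATISFIED = "satisfied"
    VIOLATED = "violated"
    INDETERMINATE = "indeterminate"

@dataclass(frozen=True)
class Assertion:
    constraint_id: str                  # which rule was applied
    event_id: str                       # which behavior was observed
    result: Result                      # the evaluative claim
    context: dict                       # state used during evaluation
    confidence: Optional[float] = None  # only for probabilistic constraints
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        if self.confidence is not None and not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

example = Assertion("access-ctl-7", "evt-001", Result.SATISFIED, {"role": "admin"})
record = asdict(example)  # plain dict, ready for serialization
```

Freezing the dataclass only guards against accidental mutation inside the process. It is not a substitute for the tamper-evident storage that assertions require once they leave the engine.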

Assertions are stored in immutable evidence stores that are append-only, tamper-evident, cryptographically verifiable, and time-ordered. The integrity of the evidence store is not optional architecture. If assertion records can be modified after generation, the evidentiary value of the assertion collapses. An audit of a mutable evidence store is an audit of what someone chose to preserve, not of what the system observed. The CAE produces evidence. The evidence store preserves it. Both must be designed with integrity as a foundational constraint, not an afterthought.
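The append-only, tamper-evident property can be sketched with a hash chain, in which each record commits to the hash of its predecessor. The `EvidenceStore` class and its in-memory list are hypothetical simplifications; a real store would persist records durably.

```python
import hashlib
import json

GENESIS = "0" * 64

class EvidenceStore:
    """Append-only list in which each record commits to the hash of its predecessor."""

    def __init__(self):
        self._records = []

    def append(self, assertion: dict) -> str:
        prev_hash = self._records[-1]["hash"] if self._records else GENESIS
        digest = self._digest(assertion, prev_hash)
        self._records.append(
            {"assertion": assertion, "prev_hash": prev_hash, "hash": digest}
        )
        return digest

    def verify(self) -> bool:
        """Recompute every link; an edit, mid-chain deletion, or reordering fails."""
        prev_hash = GENESIS
        for record in self._records:
            if record["prev_hash"] != prev_hash:
                return False
            if record["hash"] != self._digest(record["assertion"], prev_hash):
                return False
            prev_hash = record["hash"]
        return True

    @staticmethod
    def _digest(assertion: dict, prev_hash: str) -> str:
        payload = json.dumps(assertion, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode()).hexdigest()

store = EvidenceStore()
store.append({"constraint_id": "c1", "event_id": "e1", "result": "violated"})
head = store.append({"constraint_id": "c1", "event_id": "e2", "result": "satisfied"})
```

A chain of this kind does not by itself detect removal of the most recent records, and anyone who can rewrite the whole chain can recompute it. Publishing the latest hash (`head` here) to an independent party is one way to close both gaps.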

Downstream of the evidence store, the Attestation Layer - a distinct infrastructure component addressed in a subsequent chapter - aggregates assertions, produces compliance statements, and exposes evidence to auditors and regulators. The CAE generates raw evidence. The Attestation Layer surfaces that evidence in forms usable for external verification. This separation of concerns is architecturally intentional. Conflating assertion generation with attestation production creates a system where the entity producing evidence is also shaping how that evidence is presented - a structural conflict of interest that undermines the integrity of both functions.

Trust and Integrity of the Assertion System

The CAE introduces a recursive trust problem that must be addressed explicitly.

If compliance assertions are generated by an infrastructure system, then the trustworthiness of those assertions depends on the trustworthiness of the infrastructure system itself. An assertion engine that has been compromised, misconfigured, or manipulated produces assertions that are formally structured but evidentially unreliable. The audit of an assertion system must answer: who verified the verifier?

This is not a theoretical concern. It is a structural vulnerability that any adversarially sophisticated actor - a malicious insider, an external attacker who has achieved persistent access, or an organization attempting to manipulate its own compliance record - would be motivated to exploit. If an attacker can control the CAE, the CAE will assert compliance regardless of actual system behavior.

Several architectural mechanisms address recursive trust risk.

Out-of-band integrity anchors. The CAE must depend on integrity anchors that exist outside the monitored system. If the CAE validates its own integrity using components of the system it monitors, a compromise of that system may also compromise the integrity verification. Mechanisms that provide out-of-band anchors include hardware roots of trust, external log attestation services, and independent telemetry channels that the CAE operator does not control. The operational complexity of maintaining out-of-band anchors is significant. It is also non-negotiable for CAE deployments where the integrity of compliance evidence is under adversarial pressure.

Immutable logging with external attestation. Evidence stores protected by cryptographic mechanisms - Merkle-tree evidence chains, for example - allow any party to verify that a record has not been modified since it was generated. External attestation anchors, where an independent party co-signs assertion records, provide an additional integrity layer that does not depend on the trustworthiness of the assertion-generating organization.
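As an illustration of the Merkle-tree approach, the sketch below folds a batch of serialized assertions into a single root hash that an external party could co-sign. It is a simplified construction written for this example, not the layout of any particular standard. The domain-separation prefixes and the handling of odd-sized levels are assumptions.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root(leaves: list) -> str:
    """Fold a batch of serialized assertions (bytes) into a single root hash."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    # Leaves and interior nodes get different prefixes so that one
    # cannot be passed off as the other.
    level = [_h(b"\x00" + leaf) for leaf in leaves]
    while len(level) > 1:
        paired = [
            _h(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level) - 1, 2)
        ]
        if len(level) % 2 == 1:
            paired.append(level[-1])  # an unpaired node is promoted unchanged
        level = paired
    return level[0].hex()

batch = [b'{"event_id":"e1"}', b'{"event_id":"e2"}', b'{"event_id":"e3"}']
root = merkle_root(batch)
```

Co-signing only the root keeps the external attestation small while still committing to every assertion in the batch: changing any leaf changes the root.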

Independent auditing of the CAE itself. The constraint definitions encoded in the CAE, the evaluation logic, and the assertion generation mechanisms are themselves auditable artifacts. Constraint versioning, evaluation logic review, and assertion format validation allow an independent party to verify that the CAE is doing what it claims to be doing. This does not fully resolve the recursive trust problem - an auditor of the CAE is still dependent on the integrity of the audit process - but it establishes an independent review layer that reduces (though does not eliminate) the risk of undetected manipulation.

Telemetry authentication and tamper resistance. Telemetry manipulation is a precondition for most CAE integrity attacks. If an actor can modify what events the CAE observes, the actor can control what the CAE asserts without touching the evaluation logic. Authenticated, tamper-resistant telemetry collection is therefore an integrity requirement for the assertion system, not merely a data quality consideration.

The epistemic question this architecture is navigating is not resolvable by technical means alone: when can a machine-generated assertion be trusted as evidence of compliance? The answer depends on the integrity of the evaluation chain - from telemetry authentication through constraint encoding through evaluation logic through evidence storage through attestation - and on the independence of the integrity anchors at each stage. A CAE that satisfies all of these conditions produces evidence that is technically credible. Whether that evidence is legally admissible and sufficient for regulatory purposes is a separate question, addressed in the legal and governance considerations below.

Performance, Scale, and Event Infrastructure

Deploying a CAE at production scale involves engineering challenges that are distinct from the conceptual architecture. Organizations that underestimate these challenges deploy systems that are architecturally sound in design and operationally unreliable in practice.

Event infrastructure requirements. The CAE requires a reliable event pipeline capable of ingesting the full volume of telemetry from instrumented systems. This requires event streaming infrastructure with message durability, ordering guarantees, and distributed ingestion capacity sufficient to handle peak operational load without dropping events. Dropped events produce assertion gaps. Assertion gaps are evidence gaps. An evidence gap is a compliance gap until demonstrated otherwise.

Constraint execution at scale. Policy evaluation at the volume of production system telemetry is computationally intensive. The evaluation system must support large-scale rule evaluation, context-aware analysis, and streaming evaluation across the full constraint definition set. Technologies that implement these capabilities include policy engines, rule engines, and stream processors. The specific technology selection depends on constraint complexity, evaluation volume, latency requirements, and integration with existing infrastructure. What matters architecturally is that the evaluation system must be capable of evaluating every in-scope event without degradation of assertion quality under load.

The state explosion problem. As the number of monitored systems, encoded constraints, and telemetry sources grows, the volume of assertion work grows multiplicatively: in the worst case, every event type on every system must be checked against every constraint. An organization with ten systems, fifty constraints, and ten event types per system generates assertion work at a scale that is tractable. An organization with five hundred systems, two thousand constraints, and variable event types per system faces a state explosion that, without architectural mitigation, will exceed the computational capacity of any single evaluation system.

Architectural responses to state explosion include event filtering (evaluating only events relevant to defined constraints rather than all events), hierarchical evaluation (delegating constraint evaluation to local components and aggregating results centrally), sampling strategies (evaluating a statistically valid sample of events for constraints where sampling is analytically defensible), and constraint scoping (defining explicit boundaries for each constraint so evaluation is not applied across the full system surface indiscriminately). Each of these mitigations involves tradeoffs: filtering may miss relevant events, sampling introduces statistical uncertainty, hierarchical evaluation introduces aggregation complexity. These tradeoffs must be characterized and disclosed rather than obscured.
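Event filtering and constraint scoping can be sketched as an index from event type to the constraints that apply to it. The constraint identifiers and event types below are hypothetical, and `naive_pairings` is simply the worst-case product of the three quantities described above.

```python
from collections import defaultdict

# Hypothetical constraint scopes: each constraint declares the event types it applies to.
CONSTRAINT_SCOPES = {
    "access-ctl-7": {"login", "permission_change"},
    "config-lock-2": {"config_change"},
}

def build_index(scopes: dict) -> dict:
    """Invert the scope table so each event type maps to its relevant constraints."""
    index = defaultdict(set)
    for constraint_id, event_types in scopes.items():
        for event_type in event_types:
            index[event_type].add(constraint_id)
    return index

def constraints_for(event: dict, index: dict) -> set:
    """Return only the constraints scoped to this event; unscoped events match none."""
    return index.get(event["type"], set())

def naive_pairings(systems: int, constraints: int, event_types: int) -> int:
    """Worst-case count of (system, constraint, event type) combinations without scoping."""
    return systems * constraints * event_types

index = build_index(CONSTRAINT_SCOPES)
small_org = naive_pairings(10, 50, 10)
```

With scoping in place, a `heartbeat` event triggers no evaluation at all. The tradeoff noted above still applies: an event type omitted from a constraint's scope is never evaluated against it, so scope tables need the same review as the constraints themselves.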

Assertion latency. Every evaluation takes time. In synchronous evaluation mode, assertion latency directly affects operational latency. In asynchronous mode, assertion latency determines how quickly compliance violations are detected and recorded. Organizations must define acceptable assertion latency bounds for each constraint class and design evaluation infrastructure to meet those bounds under production load.

Failure Modes and Misuse

The CAE is a system with failure modes. Characterizing those failure modes is a prerequisite for operating the system responsibly.

Monitoring-assertion confusion. The most common mischaracterization of CAE infrastructure is treating comprehensive monitoring as equivalent to assertion capability. Organizations that have deployed logging and observability infrastructure, and that describe this infrastructure as a compliance assertion system, have not built a CAE. They have built the precondition for a CAE. The distinction matters operationally because monitoring infrastructure does not produce structured assertions, does not evaluate behavior against encoded constraints, and does not generate an immutable evidence record. Compliance posture described on the basis of monitoring infrastructure is overstated.

Metric gaming. A compliance system that evaluates against defined metrics will, over time, produce pressure to optimize those metrics rather than the underlying compliance objectives they represent. This is not a pathology specific to the CAE - it is Goodhart's Law applied to compliance infrastructure. When a measure becomes a target, it ceases to be a good measure. The CAE makes metric gaming technically straightforward: an organization that understands its constraint definitions can engineer system behavior to satisfy those constraints without achieving the regulatory objectives those constraints represent.

The CAE must be designed to detect metric gaming, not merely to record assertion results. Observable signals of gaming include sudden metric optimization following constraint publication, sustained "perfect compliance" across a constraint set that would be expected to produce some violation rate, and anomalously uniform outcomes across a large and diverse system surface. These signals do not constitute proof of gaming, but they constitute evidence that warrants investigation. The evaluation layer should include anomaly detection capabilities designed specifically to surface these patterns.
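The three signals can be expressed as simple checks over assertion pass rates. This is a rough sketch: the function names, the uniformity tolerance, and the jump threshold are arbitrary assumptions, and a real anomaly detector would need statistically grounded baselines.

```python
def gaming_signals(pass_rates_by_system: dict,
                   expected_violation_rate: float,
                   uniformity_tolerance: float = 0.001) -> list:
    """Flag patterns that warrant investigation; none of them proves gaming."""
    signals = []
    rates = list(pass_rates_by_system.values())
    if not rates:
        return signals
    # Perfect compliance where some violation rate is analytically expected.
    if expected_violation_rate > 0 and all(r == 1.0 for r in rates):
        signals.append("perfect_compliance")
    # Near-identical outcomes across a diverse set of systems.
    if len(rates) > 1 and max(rates) - min(rates) < uniformity_tolerance:
        signals.append("anomalously_uniform")
    return signals

def sudden_optimization(rate_before: float, rate_after: float,
                        jump_threshold: float = 0.2) -> bool:
    """Flag a sharp rise in pass rate following constraint publication."""
    return rate_after - rate_before >= jump_threshold
```

The output is a list of reasons to investigate, not a verdict, which matches the evidential weight these signals carry.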

Over-automation. The CAE can assert on computational controls. It cannot assert fully on hybrid controls and cannot assert at all on non-computational controls. An organization that extends CAE scope beyond computational controls - or that asserts complete compliance coverage based on CAE output alone - has mischaracterized its compliance posture. Over-automation produces an appearance of comprehensive coverage that conceals actual gaps. This failure mode is particularly dangerous because the outputs of the CAE look identical regardless of whether the underlying scope characterization is accurate.

Trust collapse. If the integrity of the CAE is compromised - whether through telemetry manipulation, constraint version mismatch, evaluation logic failure, evidence store tampering, or assertion engine outage - the evidence it produces is unreliable. An organization that has built its compliance verification architecture on CAE output and then experiences a trust collapse event faces a situation where its entire compliance record is of uncertain integrity. The response to this failure mode is not primarily technical. It is architectural: the CAE must be designed with the assumption that trust collapse is a possibility, and the compliance infrastructure must include mechanisms for detecting it and characterizing its scope.

Fail-open versus fail-closed behavior. When the CAE is unavailable or degraded, operational systems face a design decision: should they continue operating in the absence of constraint evaluation (fail-open), or should they halt until evaluation is restored (fail-closed)? Neither answer is universally correct. Fail-open preserves operational continuity but allows unasserted events to occur, producing evidence gaps. Fail-closed preserves evidence completeness but introduces operational availability dependencies. The appropriate choice depends on the criticality of the constraint, the risk tolerance of the organization, and the regulatory context. This decision must be made explicitly for each constraint class and documented as part of the CAE's operational design.
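Making this decision explicit per constraint class might look like the following sketch. The constraint class names, the policy table, and the default of failing closed for unlisted classes are assumptions made for illustration.

```python
from enum import Enum

class FailureMode(Enum):
    FAIL_OPEN = "fail_open"
    FAIL_CLOSED = "fail_closed"

# Hypothetical per-constraint-class policy; the class names are illustrative.
FAILURE_POLICY = {
    "access_authorization": FailureMode.FAIL_CLOSED,
    "configuration_drift": FailureMode.FAIL_OPEN,
}

evidence_gaps = []

def on_evaluator_unavailable(constraint_class: str, event_id: str) -> bool:
    """Decide whether the governed action may proceed while the CAE is down.

    Returns True if the action may proceed. Classes without an explicit
    policy fail closed (an assumption of this sketch).
    """
    mode = FAILURE_POLICY.get(constraint_class, FailureMode.FAIL_CLOSED)
    if mode is FailureMode.FAIL_OPEN:
        # The action proceeds unasserted, so the gap is recorded explicitly.
        evidence_gaps.append((constraint_class, event_id))
        return True
    return False
```

Recording each fail-open event as a named gap keeps the evidence record honest about what was not evaluated, so the gap can be characterized later rather than discovered.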

Observable Signals of Assertion System Health

An assertion infrastructure that cannot be monitored for its own health cannot be trusted to produce reliable evidence. The following signals characterize CAE operational state.

Healthy signals include assertions generated for all defined constraints, event coverage approaching the theoretical maximum for relevant telemetry, stable assertion latency within defined bounds, immutable evidence chain integrity verified continuously, and low rates of unexplained assertion gaps.

Warning signals include assertion volume collapse (fewer assertions being generated than expected given telemetry volume), telemetry ingestion gaps (events entering the system but not reaching evaluation), constraint evaluation failures (evaluation logic producing errors rather than results), delayed assertion generation (latency exceeding defined bounds), and large volumes of indeterminate assertions.

Failure signals include assertion engine outages (evaluation infrastructure unavailable), corrupted evidence store (stored assertions failing integrity verification), constraint version mismatches (evaluation occurring against outdated or incorrect constraint definitions), tamper detection events (evidence of unauthorized modification of telemetry or assertion records), and unverifiable timestamps (temporal ordering of assertions cannot be established reliably).

Behavioral signals indicating potential metric gaming include sudden optimization of assertion pass rates following constraint publication, sustained perfect compliance outcomes across constraint sets where some violation rate is analytically expected, and anomalously uniform assertion results across heterogeneous systems.

The operational team responsible for CAE infrastructure should treat these signals as first-class observability outputs, monitored with the same rigor as production system health signals. A degraded CAE is a degraded compliance posture, and the degradation may not be visible in operational dashboards if CAE health is not independently monitored.
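A subset of these signals can be combined into a single health classification. In this sketch the metric names and thresholds are hypothetical, and it assumes one assertion is expected per ingested in-scope event. Signals such as unverifiable timestamps and the metric-gaming indicators are left out for brevity.

```python
def classify_health(m: dict) -> str:
    """Map CAE metrics to 'failure', 'warning', or 'healthy', worst condition first."""
    if (not m["engine_available"]
            or not m["evidence_chain_valid"]
            or m["constraint_version_mismatches"] > 0
            or m["tamper_events"] > 0):
        return "failure"
    expected = m["events_ingested"]
    coverage = m["assertions_generated"] / expected if expected else 1.0
    if (coverage < m["min_coverage"]
            or m["p99_latency_ms"] > m["latency_bound_ms"]
            or m["indeterminate_ratio"] > m["max_indeterminate_ratio"]
            or m["evaluation_errors"] > 0):
        return "warning"
    return "healthy"

baseline = {
    "engine_available": True,
    "evidence_chain_valid": True,
    "constraint_version_mismatches": 0,
    "tamper_events": 0,
    "events_ingested": 1000,
    "assertions_generated": 998,
    "min_coverage": 0.99,
    "p99_latency_ms": 40,
    "latency_bound_ms": 100,
    "indeterminate_ratio": 0.01,
    "max_indeterminate_ratio": 0.05,
    "evaluation_errors": 0,
}
status = classify_health(baseline)
```

Checking failure conditions before warning conditions ensures that an integrity problem is never masked by otherwise normal throughput figures.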

Why Continuous Evidence Changes Compliance

The CAE's contribution to compliance infrastructure is not incremental. It is structural.

Traditional compliance generates evidence by reconstruction. The CAE generates evidence by observation. This difference changes what compliance verification can accomplish.

Reconstruction-based evidence can establish that a control existed and was configured. It can provide samples of behavior that suggest the control functioned. It cannot establish that the control functioned continuously, that no violations occurred between sampled observations, or that the evidence record is complete rather than selectively assembled.

Observation-based evidence - evidence produced by runtime assertion at the moment behavior occurs - can establish behavioral continuity. Provided telemetry coverage is complete, it can establish that every in-scope event was evaluated. It can establish that violations were detected and recorded as they occurred rather than discovered through retrospective analysis. It provides the foundation for compliance verification that is behavioral rather than documentary.

This is not an incremental improvement in audit quality. It is a change in what compliance verification is capable of claiming. An organization with functioning CAE infrastructure can demonstrate continuous constraint evaluation across its in-scope control surface. An organization without it can demonstrate that its documentation was accurate at the time it was produced.

The practical consequence is that regulatory requirements increasingly oriented toward demonstrating continuous control effectiveness cannot be satisfied by documentation-based compliance models. The CAE is not a better way to manage documentation. It is the infrastructure component that makes continuous behavioral evidence technically feasible.

Transition to Attestation

The CAE produces raw compliance evidence. This evidence is the input to the next layer of the compliance infrastructure stack: the Attestation Layer.

Upstream of the CAE, regulatory interpretation, constraint lifecycle management, constraint translation, and system instrumentation layers produce the machine-readable constraint definitions and authenticated telemetry that the CAE evaluates. These layers are responsible for ensuring that what the CAE evaluates corresponds to what regulators require.

The CAE evaluates and asserts. It converts system activity into structured compliance evidence. It does not aggregate evidence into compliance statements, expose evidence to auditors, or produce the external-facing representations of compliance posture that regulators and auditors consume. Those functions belong to the Attestation Layer.

This separation of concerns is load-bearing. An infrastructure component that both generates assertions and produces attestations is at once the source and the reporter of compliance evidence, which is a structural conflict of interest. The compliance infrastructure model maintains this separation explicitly: the CAE generates, the Attestation Layer aggregates and exposes.

For organizations building compliance infrastructure, the operational implication is that CAE deployment is necessary but not sufficient. The evidence produced by the CAE must flow into downstream infrastructure capable of aggregating it, structuring it for regulatory consumption, and exposing it for external verification. The CAE section of the compliance infrastructure build is followed immediately by the attestation section. Neither is complete without the other.

Unresolved Questions and Governance Considerations

Several questions in CAE deployment remain active governance problems without architecturally complete answers.

Legal admissibility. Machine-generated assertions represent a category of evidence that regulatory and legal frameworks are still characterizing. Whether assertion records constitute legally admissible evidence of compliance, how they interact with existing evidentiary standards, and what retention and governance obligations apply to assertion archives are questions that organizations must resolve in coordination with legal counsel and regulators. The CAE produces evidence of a form that compliance frameworks have not fully addressed. Organizations deploying this infrastructure must engage with regulators proactively rather than assuming admissibility.

Constraint ownership and versioning. Constraint definitions are the normative inputs to the evaluation engine. Changes to constraint definitions change what behaviors are characterized as compliant. Who owns constraint definitions, who can modify them, and how modifications are versioned and disclosed are governance questions with significant compliance implications. A constraint change that reclassifies previously non-compliant behavior as compliant is a governance event of the same order as a policy change. Constraint lifecycle governance must be treated with commensurate seriousness.

Hybrid control handling. The architecture characterizes hybrid controls as partially assertable. The operational question of how to handle the non-assertable portions - how to integrate human validation into an evidence record that is otherwise machine-generated, how to characterize partial assertion coverage in compliance reporting, and how to prevent the assertable portions of hybrid controls from being reported as equivalent to complete coverage - does not have a standardized answer. Organizations must define their own governance policies for hybrid control handling and document those policies as part of their compliance infrastructure disclosure.

Compliance theater through automation. The CAE creates conditions under which an organization can generate voluminous, formally structured compliance evidence while operating controls that do not achieve their regulatory objectives. This is compliance theater at infrastructure scale. The prevention of this failure mode is an organizational governance problem more than a technical one. It requires that constraint definitions remain anchored to regulatory objectives, that constraint coverage is reviewed for scope accuracy, and that behavioral signals of metric gaming are treated as material compliance concerns rather than technical anomalies. The technical infrastructure can surface these signals. Acting on them requires organizational governance that is not provided by the infrastructure itself.

The Control Assertion Engine converts runtime system behavior into verifiable compliance evidence. It is the component in the compliance infrastructure stack that closes the observation gap between control deployment and evidence generation. Its architectural integrity - the authenticity of its telemetry, the accuracy of its constraint encoding, the immutability of its evidence store, and the independence of its integrity anchors - determines the reliability of the compliance evidence that downstream infrastructure aggregates and reports. Understanding what the CAE does, what it cannot do, and what is required to operate it with integrity is foundational to deploying compliance infrastructure that produces evidence rather than documentation.